
Who Do You Think Won the One Million File Backup Challenge?

by Bridget.Giacinto on Jul 25, 2014 3:18:04 PM

Have you ever wondered why two sets of files of the same cumulative size can take drastically different amounts of time to back up? It all comes down to the size of the individual files. With the total size held equal, a backup set that contains a large number of small files will take longer to back up than one that contains a smaller number of large files. Why? The primary reason is fragmentation, but additionally, the more files you have, the more overhead is required to open each file, read its metadata, and allocate and record its data. A large portion of disk activity may be spent simply locating files rather than performing the actual backup. With all else equal, it comes down to the efficiency of your backup software, which is what prompted us to run this benchmark study.
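To get a feel for the per-file overhead described above, here is a minimal Python sketch. It is not the benchmark used in this study: the file counts, sizes, and helper names (`write_files`, `read_all`) are assumptions chosen so that it runs in a second or two. It writes the same number of bytes once as many small files and once as a single large file, then times how long it takes to read each set back. Because the files were just written, they are probably still in the operating system's cache, so the sketch mostly shows open/close overhead rather than disk seek time.

```python
import os
import tempfile
import time

SMALL_COUNT = 2000   # assumed value, far below the 1,000,000 files in the study
SMALL_SIZE = 1024    # bytes per small file (assumed)


def write_files(directory, count, size):
    """Create `count` files of `size` bytes each and return their paths."""
    payload = b"x" * size
    paths = []
    for i in range(count):
        path = os.path.join(directory, f"file_{i:06d}.bin")
        with open(path, "wb") as f:
            f.write(payload)
        paths.append(path)
    return paths


def read_all(paths):
    """Open and read every file; return (total_bytes, elapsed_seconds)."""
    start = time.perf_counter()
    total = 0
    for path in paths:
        # One open/read/close cycle per file: this is the per-file overhead.
        with open(path, "rb") as f:
            total += len(f.read())
    return total, time.perf_counter() - start


with tempfile.TemporaryDirectory() as tmp:
    small_dir = os.path.join(tmp, "small")
    large_dir = os.path.join(tmp, "large")
    os.mkdir(small_dir)
    os.mkdir(large_dir)

    small_paths = write_files(small_dir, SMALL_COUNT, SMALL_SIZE)
    large_paths = write_files(large_dir, 1, SMALL_COUNT * SMALL_SIZE)

    small_bytes, small_secs = read_all(small_paths)
    large_bytes, large_secs = read_all(large_paths)

print(f"{len(small_paths)} small files: {small_bytes} bytes in {small_secs:.4f} s")
print(f"{len(large_paths)} large file:  {large_bytes} bytes in {large_secs:.4f} s")
```

The timings will vary by machine and file system, so treat them as an illustration of the effect and not as a measurement of any backup product.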

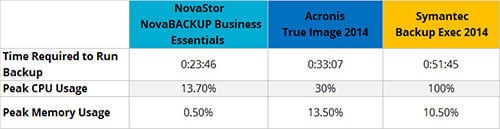

Our quest was to find out how NovaBACKUP stacked up against Acronis and Symantec in a head-to-head test of speed, CPU usage, and peak memory usage when tasked with backing up a million small files to a local disk drive. An internal benchmark study was conducted by NovaBACKUP Engineers to test this specific scenario and to rate the efficiency of our backup software in comparison to our competitors. The results may surprise you.

1,000,000 Small File Backup Challenge

Results of the 1,000,000 Small File Backup Challenge:

Why You Want Backup Jobs to Run Quickly


IT system administrators, or whoever is responsible for backing up your data, will most likely be tasked with ensuring that backups complete in the shortest possible time with minimal impact on the system’s overall performance. This is often done by first establishing a backup window: a predetermined time slot allocated to backing up specific data, applications, databases, or systems, chosen so that the backup does not disrupt normal operations. The faster your backups are, the better the chance that, as your data grows (and it will grow), you will still be able to stay within that backup window.

Establishing a backup window is done by monitoring and identifying a system’s peak and non-peak CPU usage. If backups cause high CPU usage, you have no other choice but to schedule backups during non-peak hours, or they will, without a doubt, disrupt performance. The lower the CPU utilization, the more flexibility you have in terms of when you can run your backup jobs.
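As a rough illustration of how peak and non-peak hours might be turned into a backup window, the Python sketch below picks the contiguous block of hours with the lowest average CPU usage. The function name `quietest_window` and the hourly samples are hypothetical. In practice you would feed in averages collected from your own monitoring tool.

```python
def quietest_window(hourly_cpu, window_hours):
    """Return (start_hour, average_cpu) for the lowest-average contiguous window.

    hourly_cpu holds one average CPU percentage per hour of the day
    (index 0 = midnight). The window may wrap around past midnight.
    """
    n = len(hourly_cpu)
    best_start, best_avg = 0, float("inf")
    for start in range(n):
        window = [hourly_cpu[(start + k) % n] for k in range(window_hours)]
        avg = sum(window) / window_hours
        if avg < best_avg:
            best_start, best_avg = start, avg
    return best_start, best_avg


# Hypothetical hourly CPU averages (percent) for one server, midnight to 11 PM.
samples = [12, 8, 5, 4, 6, 10, 25, 45, 70, 85, 90, 88,
           80, 86, 89, 84, 75, 60, 40, 30, 22, 18, 15, 13]

start_hour, avg_cpu = quietest_window(samples, window_hours=4)
print(f"Quietest 4-hour window starts at {start_hour}:00 (avg CPU {avg_cpu:.2f}%)")
```

A backup that needs less CPU and finishes sooner fits into a shorter window, which is why the next two measurements matter.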

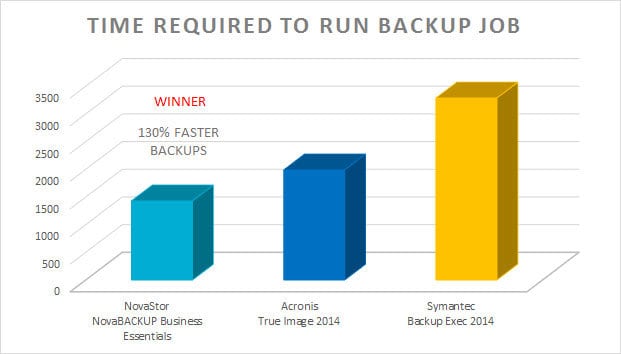

In our one-million small-file backup benchmark study, we looked at how much time it took each backup software program to run the exact same backup job. NovaBACKUP Server Agent (previously Business Essentials) took the least time: it was 130% faster than Symantec and almost 40% faster than Acronis.
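A quick note on reading "percent faster" figures. The raw timings are not published in this post, so the sketch below assumes the common throughput interpretation: "X% faster" means the slower product took (1 + X/100) times as long to finish the same job. That is the only reading that makes sense for a figure above 100%. The function names are ours and purely illustrative.

```python
def percent_faster(slower_seconds, faster_seconds):
    """How much faster (in percent) the quicker run was, by throughput."""
    return (slower_seconds / faster_seconds - 1.0) * 100.0


def time_ratio_from_percent(percent):
    """How many times longer the slower run took, given an 'X% faster' figure."""
    return 1.0 + percent / 100.0


# Applying the assumed interpretation to the percentages quoted in this post.
symantec_ratio = time_ratio_from_percent(130)
acronis_ratio = time_ratio_from_percent(39)
print(f"130% faster -> the slower run took about {symantec_ratio:.2f}x as long")
print(f"39% faster  -> the slower run took about {acronis_ratio:.2f}x as long")

# Round trip with made-up timings (NOT measured values): 230 s versus 100 s.
print(f"230 s vs 100 s -> {percent_faster(230, 100):.0f}% faster")
```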

Why is High CPU Usage an Issue?

If you are experiencing high CPU utilization when running backup jobs, you are likely also seeing sluggish system performance or unexpected errors. When a server's CPU is running at or above 80-90% utilization, applications on the server are likely to slow down, stop responding, or throw errors, and backups may fail. If CPU utilization maxes out at 100% when your backup jobs run, the backup has to compete with every other process for processor time, which can cause server performance problems and failed backup jobs. To find out which process is causing the high CPU usage, use Task Manager to view the processes currently running on your Windows system. Press Ctrl + Shift + Esc to open Task Manager, then click the Processes tab. To see which process is eating up your CPU, click the “CPU” column header to sort the list by CPU usage, highest first.
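If you prefer a number to a glance at Task Manager, the idea behind a CPU utilization figure can be sketched in a few lines of Python: divide the CPU time a job consumed by the wall-clock time it took, then normalize by the number of logical processors. This sketch only measures the Python process it runs in, not another program such as a backup agent, and the `cpu_utilization` and `busy_loop` names are our own illustrative choices.

```python
import os
import time


def cpu_utilization(workload):
    """Run workload() and return (cpu_seconds, wall_seconds, percent_of_machine)."""
    wall_start = time.perf_counter()
    cpu_start = time.process_time()   # CPU time used by this process so far
    workload()
    cpu_used = time.process_time() - cpu_start
    wall_used = time.perf_counter() - wall_start

    cores = os.cpu_count() or 1
    # Share of the whole machine's CPU capacity used during the run.
    percent = 100.0 * cpu_used / (wall_used * cores) if wall_used > 0 else 0.0
    return cpu_used, wall_used, percent


def busy_loop():
    """Stand-in workload: pure computation that keeps one core busy briefly."""
    total = 0
    for i in range(200_000):
        total += i * i
    return total


cpu_s, wall_s, pct = cpu_utilization(busy_loop)
print(f"CPU time {cpu_s:.4f} s over {wall_s:.4f} s wall clock ({pct:.1f}% of machine)")
```

On a multi-core machine a single-threaded job like this can only account for a fraction of the total, which is worth remembering when comparing percentages across systems.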

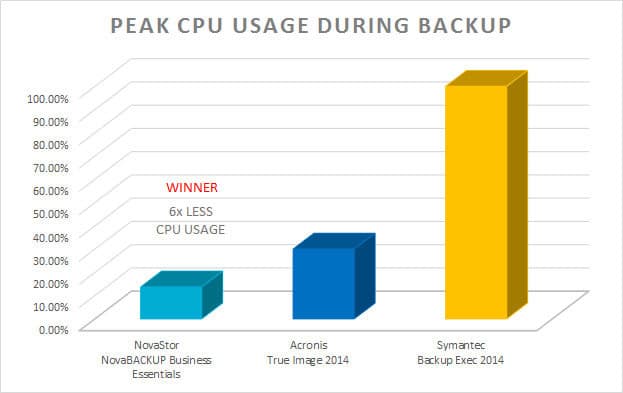

In our one-million small-file backup benchmark study, NovaBACKUP used 6.3x less CPU than Symantec and 1.2x less than Acronis.

Why You Don’t Want High Memory Usage

Excessive memory usage can result in memory shortages, even on a typical server with 16 GB of memory or more. Memory shortages develop when multiple processes demand more memory than is currently available, or when a poorly written application leaks memory. If you run a lot of programs simultaneously, as most of us do, you need backup software that is light on memory so that it can run in the background without hogging your system's memory.
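One general way a file-processing program can stay light on memory is to stream data in small chunks instead of loading whole files at once. The Python sketch below illustrates that idea only. It is not a description of how NovaBACKUP or any other product works, and the chunk size, file size, and function names are assumptions. It uses the standard `tracemalloc` module to compare the peak Python memory allocation of the two approaches while hashing the same file.

```python
import hashlib
import os
import tempfile
import tracemalloc

CHUNK_SIZE = 64 * 1024            # assumed chunk size: 64 KB
SAMPLE_SIZE = 8 * 1024 * 1024     # assumed sample file size: 8 MB


def digest_whole(path):
    """Hash a file by reading it into memory in one go."""
    with open(path, "rb") as f:
        return hashlib.sha256(f.read()).hexdigest()


def digest_chunked(path, chunk_size=CHUNK_SIZE):
    """Hash a file by streaming it through a small buffer."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        while True:
            chunk = f.read(chunk_size)
            if not chunk:
                break
            h.update(chunk)
    return h.hexdigest()


def peak_bytes(func, path):
    """Run func(path) and return (result, peak traced allocation in bytes)."""
    tracemalloc.start()
    try:
        result = func(path)
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return result, peak


with tempfile.TemporaryDirectory() as tmp:
    sample = os.path.join(tmp, "sample.bin")
    with open(sample, "wb") as f:
        f.write(os.urandom(SAMPLE_SIZE))

    whole_digest, whole_peak = peak_bytes(digest_whole, sample)
    chunk_digest, chunk_peak = peak_bytes(digest_chunked, sample)

print(f"whole-file read: peak {whole_peak / 1024:.0f} KB")
print(f"chunked read:    peak {chunk_peak / 1024:.0f} KB")
```

Both approaches produce the same hash, but the chunked version never needs to hold more than a chunk or two in memory at a time.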

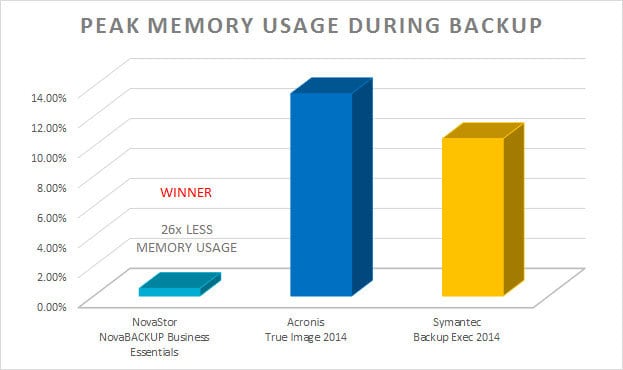

In our one-million small-file backup benchmark study, NovaBACKUP used 26x less peak memory than Acronis and 20x less than Symantec.

Here is the full results breakdown:

NovaBACKUP vs Acronis True Image 2014

- NovaBACKUP is 39% faster in backup speeds than Acronis

- NovaBACKUP uses 1.2x less CPU power than Acronis

- NovaBACKUP uses 26x less memory during backup than Acronis

NovaBACKUP vs Symantec Backup Exec 2014

- NovaBACKUP is 130% faster in backup speeds than Symantec

- NovaBACKUP uses 6.3x less CPU power than Symantec

- NovaBACKUP uses 20x less memory during backup than Symantec

The Clear Winner on All Counts: NovaBACKUP

Learn more about NovaBACKUP Server Agent (previously Business Essentials)

Benchmarking Environment:

Windows 7

Intel® Core™ i7-4770 CPU @ 3.4 GHz

Installed memory (RAM): 16 GB

Backup Location: Local Disk Drive
